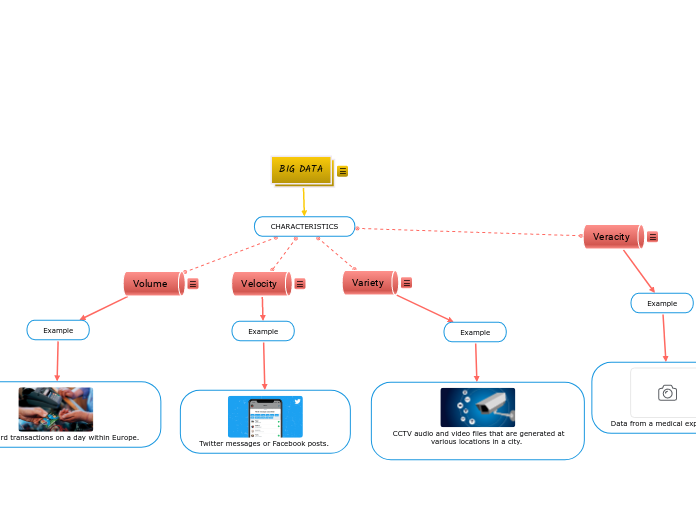

BIG DATA CHARACTERISTICS

Veracity

Example

Data from a medical experiment, whose accuracy and reliability must be checked before the results can be trusted.
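In practice, veracity often means checking each record before analysing it. This is a minimal illustrative sketch, not a prescribed validation method. It assumes hypothetical heart-rate readings from such an experiment and an assumed plausibility range, and it separates trustworthy readings from missing or implausible ones.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Reading:
    patient_id: str
    heart_rate_bpm: Optional[float]  # None when the sensor reported nothing

# Hypothetical plausibility range; real limits depend on the experiment's protocol.
MIN_BPM, MAX_BPM = 20.0, 250.0

def split_by_veracity(readings):
    """Separate readings we can trust from ones that are missing or implausible."""
    trusted, suspect = [], []
    for r in readings:
        if r.heart_rate_bpm is None or not (MIN_BPM <= r.heart_rate_bpm <= MAX_BPM):
            suspect.append(r)
        else:
            trusted.append(r)
    return trusted, suspect

# Hypothetical sample data
readings = [
    Reading("p1", 72.0),
    Reading("p2", None),    # missing value
    Reading("p3", 999.0),   # likely sensor glitch
    Reading("p4", 58.5),
]
trusted, suspect = split_by_veracity(readings)
print([r.patient_id for r in trusted])  # ['p1', 'p4']
print([r.patient_id for r in suspect])  # ['p2', 'p3']
```

Suspect records are set aside rather than silently dropped, so someone can still review them.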

Variety

Example

CCTV audio and video files generated at various locations in a city, arriving in several different (mostly unstructured) formats.
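Variety means a single pipeline has to handle many kinds of data at once. The following minimal sketch routes incoming CCTV files by the kind of data they hold. The extension-to-kind table and the file names are hypothetical. Real systems usually inspect file contents rather than trusting extensions alone.

```python
from collections import defaultdict
from pathlib import PurePath

# Hypothetical mapping from file extension to the kind of data it holds.
KIND_BY_EXTENSION = {
    ".mp4": "video",
    ".avi": "video",
    ".wav": "audio",
    ".mp3": "audio",
    ".json": "metadata",
    ".csv": "metadata",
}

def group_by_kind(paths):
    """Bucket files by data kind so each group can go to a suitable processor."""
    groups = defaultdict(list)
    for p in paths:
        kind = KIND_BY_EXTENSION.get(PurePath(p).suffix.lower(), "unknown")
        groups[kind].append(p)
    return dict(groups)

# Hypothetical file names from two cameras
files = [
    "cam_north/2024-05-01T08.mp4",
    "cam_north/2024-05-01T08.wav",
    "cam_station/events.json",
    "cam_station/snapshot.JPG",
]
print(group_by_kind(files))
```

Files of an unrecognised kind land in an `unknown` bucket instead of raising an error, which keeps the pipeline running when a new format appears.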

Velocity

Example

Twitter messages or Facebook posts, which are created continuously and at a very high rate.
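Data that arrives this fast is often summarised over a short moving time window rather than stored first and analysed later. This is a minimal sliding-window counter, assuming events (for example posts) arrive in non-decreasing timestamp order. The class name and window size are illustrative and do not come from any particular streaming framework.

```python
from collections import deque

class SlidingWindowCounter:
    """Counts events seen in the last `window_seconds` seconds."""

    def __init__(self, window_seconds: float):
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def add(self, timestamp: float) -> None:
        # Assumes events arrive in non-decreasing timestamp order.
        self._timestamps.append(timestamp)
        self._evict(timestamp)

    def count(self, now: float) -> int:
        self._evict(now)
        return len(self._timestamps)

    def _evict(self, now: float) -> None:
        # Keep only events inside the window (now - window_seconds, now].
        while self._timestamps and self._timestamps[0] <= now - self.window_seconds:
            self._timestamps.popleft()

counter = SlidingWindowCounter(window_seconds=1.0)
for t in [0.0, 0.2, 0.5, 0.9, 1.1, 1.3]:  # hypothetical post timestamps (seconds)
    counter.add(t)
print(counter.count(now=1.3))  # 4
```

Memory use depends only on the number of events inside the window, not on the total stream length.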

Volume

Example

All credit card transactions made within Europe in a single day.
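At this volume, data is usually processed as a stream rather than loaded into memory all at once. This is a minimal sketch assuming a hypothetical CSV layout with `country` and `amount_eur` columns. It reads one row at a time and keeps only running totals per country.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal

def totals_by_country(lines):
    """Sum transaction amounts per country, reading one row at a time.

    Only the running totals are held in memory, so `lines` can be a file
    object opened on an export far larger than available RAM.
    """
    totals = defaultdict(Decimal)  # Decimal() starts each total at 0
    for row in csv.DictReader(lines):
        totals[row["country"]] += Decimal(row["amount_eur"])
    return dict(totals)

# Hypothetical CSV layout: one transaction per row.
sample = io.StringIO(
    "txn_id,country,amount_eur\n"
    "1,DE,19.99\n"
    "2,FR,5.00\n"
    "3,DE,120.50\n"
)
print(totals_by_country(sample))
```

`Decimal` is used instead of `float` so that currency amounts add up exactly.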